How would you like to start your analysis?

The sentiment analysis is performed using the "nlptown/bert-base-multilingual-uncased-sentiment" model from Hugging Face. This BERT-based model is fine-tuned on product reviews in six languages (English, Dutch, German, French, Spanish and Italian) and predicts a rating from 1 to 5 stars for each text.
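
As a rough illustration of how such a model can be called, the sketch below uses the Hugging Face `transformers` pipeline and then groups the star labels into three sentiment classes. The grouping (1-2 stars negative, 3 neutral, 4-5 positive) and the helper names `stars_to_sentiment` and `classify` are assumptions made for this example, not necessarily what the tool itself does.

```python
from typing import Dict, List

MODEL_NAME = "nlptown/bert-base-multilingual-uncased-sentiment"


def stars_to_sentiment(label: str) -> str:
    """Map a model label such as '4 stars' to a coarse class.

    The cut-offs are an assumption for illustration only.
    """
    stars = int(label.split()[0])
    if stars <= 2:
        return "negative"
    if stars == 3:
        return "neutral"
    return "positive"


def classify(texts: List[str]) -> List[Dict[str, object]]:
    """Run the model on a list of texts and attach a coarse sentiment."""
    # Imported here so the mapping above works without transformers installed.
    from transformers import pipeline

    classifier = pipeline("sentiment-analysis", model=MODEL_NAME)
    results = classifier(texts)
    return [
        {
            "text": text,
            "label": result["label"],   # e.g. "5 stars"
            "score": result["score"],   # model confidence for that label
            "sentiment": stars_to_sentiment(result["label"]),
        }
        for text, result in zip(texts, results)
    ]
```

Calling `classify(["Great service, would visit again."])` downloads the model on first use and returns one dictionary per input text.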

According to the Hugging Face model page, the model's accuracy on English text is approximately 95% when a prediction within one star of the true rating is counted as correct (off-by-one accuracy). In our previous experiments on manually annotated Welsh reviews, the accuracy for Welsh was approximately 73%.
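
An accuracy figure for a star-rating model depends on what counts as a correct prediction. The sketch below shows two common definitions, exact match and off-by-one, on a small made-up set of ratings; the data and function names are purely illustrative and are not taken from the model page or from the Welsh experiments.

```python
from typing import Sequence


def exact_accuracy(true_stars: Sequence[int], predicted_stars: Sequence[int]) -> float:
    """Share of predictions that match the true star rating exactly."""
    hits = sum(t == p for t, p in zip(true_stars, predicted_stars))
    return hits / len(true_stars)


def off_by_one_accuracy(true_stars: Sequence[int], predicted_stars: Sequence[int]) -> float:
    """Share of predictions within one star of the true rating."""
    hits = sum(abs(t - p) <= 1 for t, p in zip(true_stars, predicted_stars))
    return hits / len(true_stars)


# Hypothetical annotations and predictions, for illustration only.
annotated = [5, 4, 1, 3, 2]
predicted = [5, 5, 1, 1, 2]

print(exact_accuracy(annotated, predicted))       # 0.6
print(off_by_one_accuracy(annotated, predicted))  # 0.8
```

Off-by-one accuracy is always at least as high as exact accuracy, so the two figures should not be compared directly.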

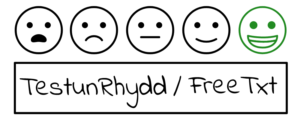

How do you want to categorise the sentiments?

Scatter Plot Overview

The scatter plot displays the single words most strongly associated with each sentiment. The x-axis shows how often a word is used in positive text, and the y-axis shows how often it is used in negative and neutral text.

Colour Coding:

Terms used most frequently in both sentiment classes appear towards the top-right of the plot, while terms used least frequently in both appear towards the bottom-left.

Score Range:

Scores range from -1 to 1. Scores near 0 indicate words with similar frequencies in both classes (yellow and orange dots). Scores near 1 indicate words that are more frequent in positive contexts (blue), and scores near -1 indicate words that are more frequent in negative contexts (red). Darker shades of blue or red indicate scores closer to the respective extreme.
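
The reading of the score range can be sketched as a small lookup. The width of the "similar frequency" band around 0 (`neutral_band`) is a hypothetical value chosen for this example; the plot itself uses a continuous colour scale rather than fixed cut-offs.

```python
def describe_score(score: float, neutral_band: float = 0.25) -> str:
    """Describe which colour family a score in [-1, 1] falls into.

    neutral_band is an assumed threshold, used here only for illustration.
    """
    if not -1.0 <= score <= 1.0:
        raise ValueError("score must lie between -1 and 1")
    if abs(score) <= neutral_band:
        return "yellow/orange: similar frequency in both classes"
    if score > 0:
        return "blue: more frequent in positive contexts"
    return "red: more frequent in negative contexts"


for example_score in (0.9, 0.05, -0.7):
    print(example_score, "->", describe_score(example_score))
```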

Interactive Features:

Hovering over a dot reveals the word's frequency per 25,000 words in each class, along with its Scaled F-Score. These frequencies determine the dot's position on the plot. For instance, a word might show 195:71 per 25k words, meaning 195 occurrences per 25,000 words in one class and 71 in the other. Using the query box or clicking on a dot provides more details, such as the frequency per 1,000 Reddit posts ('doc').
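
A per-25,000-words figure is a raw count rescaled to a common corpus size, so that classes of different sizes can be compared. The sketch below shows this normalisation; the raw counts and class sizes are invented so that the result matches the 195:71 example, and `per_n_words` is a hypothetical helper rather than part of the plotting library.

```python
def per_n_words(count: int, total_words: int, n: int = 25_000) -> float:
    """Rescale a raw word count to occurrences per n words."""
    if total_words <= 0:
        raise ValueError("total_words must be positive")
    return count * n / total_words


# Invented figures: 39 occurrences in a 5,000-word class,
# 142 occurrences in a 50,000-word class.
first_class = per_n_words(39, 5_000)
second_class = per_n_words(142, 50_000)

print(f"{first_class:.0f}:{second_class:.0f} per 25k words")  # 195:71 per 25k words
```

Although the word occurs more often in absolute terms in the second class (142 vs. 39), it is relatively more frequent in the first once the class sizes are taken into account.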